How to Hire Generative AI Developers: The Real Vetting That Cuts Risk

Published by Iryna Seleman • 8 min read

- The Role Taxonomy: Getting This Wrong Before You Source

- The four profiles that appear most often in generative AI hiring:

- The Skills That Actually Differentiate GenAI Developers

- LLM API Integration and Context Management

- RAG Architecture and Vector Database Experience

- Agentic Systems and Multi-Agent Orchestration

- MLOps and Observability

- Hallucination Mitigation - The Litmus Test Skill

- The Interview Framework That Reveals Real Production Depth

- The Technical Skills Stack: What to Verify Before the Interview

- Salary Benchmarks and Rate Ranges in 2026

- Where to Find Verified Generative AI Engineers

- FAQ

- Conclusion

The most expensive mistake in GenAI hiring happens before the first interview. Here is how to prevent it - and what the vetting looks like when it's done correctly.

The demand for AI-specialized talent has grown 3.5 times since 2020 and shows no sign of plateauing. Average AI engineer salaries reached $206,000 in 2025 - a $50,000 year-over-year increase. Demand for prompt engineers alone surged 135.8% in the same period. Over 70% of technology leaders now cite AI talent shortages as a critical barrier to their AI initiatives.

The supply constraint is real. But the hiring problem at most companies isn't finding candidates - it's running a process that can tell apart an engineer who has deployed generative AI systems in production from one who has watched the same YouTube tutorials. Hiring an ML engineer when you need an LLM integration engineer is a $185,000 mistake companies make regularly. The roles look similar on a resume. They require fundamentally different skills. And most generative AI job descriptions conflate them before the first interview takes place.

The vetting framework that works focuses on three production signals: how candidates reason about hallucination mitigation, how they make the RAG-versus-fine-tuning architectural decision, and whether their project examples involve systems that ran in production or notebooks that ran on their laptop. Everything else - Python fluency, framework familiarity, model API experience - is table stakes that a resume scan can verify. Real depth lives in the reasoning under pressure, not the technology list.

The Role Taxonomy: Getting This Wrong Before You Source

An AI engineer builds AI-powered products - LLM integrations, RAG pipelines, and AI APIs - focusing on software engineering for AI features. An ML engineer designs and trains machine learning models, manages training pipelines, and optimizes model performance. A data scientist analyzes data to generate business insights.

In 2026, the most valuable hire for most product companies is an AI engineer with strong software engineering fundamentals - not an ML engineer or a data scientist. Over 75% of AI job listings specifically seek domain experts with deep, focused knowledge; generalists face increasing competition, while specialists command 30–50% salary premiums over them.

The four profiles that appear most often in generative AI hiring:

AI Engineer (LLM Integration): Builds products on top of existing frontier models - GPT-4.1, Claude 4, Gemini, Llama. Their work is integrating LLM APIs, building RAG pipelines, designing prompting strategies, managing context windows, and deploying AI features within existing engineering infrastructure. This is the profile most product companies actually need.

ML Engineer: Trains, fine-tunes, and deploys custom models. Manages training pipelines, handles GPU clusters, optimizes compute costs. You need this profile when your competitive moat requires a proprietary model - not when you're building a product that uses existing frontier LLMs.

Prompt Engineer: Specialists in prompt optimization, system prompt design, few-shot structuring, and chain-of-thought patterns. Often a hybrid role embedded within an AI engineering team rather than a standalone hire at early-stage companies.

LLM Architect: Senior profile that defines the overall model strategy, RAG architecture, cost optimization approach, and AI governance framework. Typically a principal or staff-level engineer who owns the AI infrastructure decisions rather than individual feature builds.

Outsource AI development until you reach $1M+ ARR or Series A. Before that point, hiring senior AI engineers is costly, the hiring process averages 45 days, outcomes are often mediocre, and the role carries high risk.

Pre-vetted AI engineering capacity at $30–$60/hr provides immediate access to senior expertise without the hiring overhead, benefits costs, or a long-term commitment before the architecture is stable.

The Skills That Actually Differentiate GenAI Developers

Every generative AI job description lists Python, PyTorch, and LLM experience. Those are entry requirements, not differentiators. The skills that separate production-capable engineers from tutorial-level candidates are specific and require active probing to surface.

LLM API Integration and Context Management

Not all engineers who have "worked with LLMs" have managed the complexity of production LLM integrations: streaming, function calling, structured output, token budget management across long conversations, and graceful fallback when model outputs violate the expected format. An engineer who has only made synchronous API calls with simple prompts has not encountered the failure modes that emerge at scale.

The interview signal: ask them to describe a specific token budget problem they solved and the trade-offs they made. Engineers with production experience answer with specific context window sizes, chunking decisions, and the cost implications of different approaches. Engineers without it answer with a general description of token limits.
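To make the token-budget discussion concrete, here is a minimal Python sketch of one common trade-off: always keep the system prompt, then keep as many of the most recent turns as fit. The names (`Message`, `estimate_tokens`, `fit_to_budget`) are hypothetical, and the four-characters-per-token estimate is a rough assumption; a production system would count tokens with the provider's own tokenizer and might summarise dropped turns rather than discard them.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Message:
    role: str      # "system", "user", or "assistant"
    content: str


def estimate_tokens(text: str) -> int:
    # Assumption: ~4 characters per token, a rough average for English text.
    # Swap in the model provider's tokenizer for real budgets.
    return max(1, len(text) // 4)


def fit_to_budget(system: Message, history: list[Message], budget: int) -> list[Message]:
    """Keep the system prompt, then as many of the newest turns as fit in `budget` tokens."""
    used = estimate_tokens(system.content)
    if used > budget:
        raise ValueError("system prompt alone exceeds the token budget")
    kept: list[Message] = []
    for msg in reversed(history):  # walk from newest to oldest
        cost = estimate_tokens(msg.content)
        if used + cost > budget:
            break  # older turns are dropped once the budget is exhausted
        kept.append(msg)
        used += cost
    return [system] + kept[::-1]  # restore chronological order
```

A candidate with production experience will usually go further, for example reserving part of the budget for the model's reply; the point of the sketch is that the trade-off is explicit, not that this particular policy is the right one.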

RAG Architecture and Vector Database Experience

Retrieval-Augmented Generation is now foundational infrastructure rather than a novel technique. RAG architecture includes vector database selection (Pinecone, pgvector, Qdrant), embedding models, chunking strategies, and hybrid retrieval. But knowing the tools is different from understanding the design decisions: when hybrid retrieval outperforms pure semantic search (for example, on queries containing exact product codes or error strings), how the chunking strategy affects retrieval quality across different document types, and how to keep a RAG index fresh at scale.
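Hybrid retrieval usually means running a keyword search (such as BM25) and a vector search side by side and merging the two ranked lists. A minimal Python sketch of one well-known merging technique, reciprocal rank fusion, is shown below; the document IDs are illustrative, the two retrievers are assumed to exist elsewhere, and `k=60` is the constant commonly used in the RRF literature rather than a tuned value.

```python
from __future__ import annotations


def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Merge several ranked lists of document IDs into one ranking.

    Each document scores sum(1 / (k + rank)) over every list it appears in,
    so documents ranked well by multiple retrievers rise to the top.
    """
    scores: dict[str, float] = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    return sorted(scores, key=scores.__getitem__, reverse=True)


# Illustrative output of a keyword retriever and a vector retriever for the same query.
keyword_hits = ["doc-7", "doc-2", "doc-9"]
vector_hits = ["doc-2", "doc-4", "doc-7"]
print(reciprocal_rank_fusion([keyword_hits, vector_hits]))  # ['doc-2', 'doc-7', 'doc-4', 'doc-9']
```

RRF only uses ranks, not raw scores, which is why it is a convenient default when keyword and vector scores live on incomparable scales.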

Traditional coding assessments fall short when evaluating RAG and LLM skills - these are complex, multi-faceted capabilities that blend information retrieval, machine learning, and software engineering in ways that a standard algorithm challenge doesn't surface.

The interview signal: ask for their chunking strategy for a specific document corpus and why they made that choice. The right answer depends on the corpus - legal documents, product documentation, and customer support transcripts each require different approaches. Engineers with production RAG experience explain how the corpus shaped their choice. Engineers without it give a generic answer about chunk size.
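The corpus-dependence point can be sketched in Python with two simple strategies: a sliding word window with overlap, which might suit unstructured text such as support transcripts, and paragraph packing, which keeps sections of structured documentation intact. Both functions are hypothetical illustrations; word counts stand in for token counts, and real pipelines often split on headings, sentences, or clause boundaries instead.

```python
from __future__ import annotations


def chunk_words(text: str, size: int, overlap: int) -> list[str]:
    """Sliding window of `size` words; consecutive chunks share `overlap` words."""
    if size <= 0 or not 0 <= overlap < size:
        raise ValueError("require size > 0 and 0 <= overlap < size")
    words = text.split()
    step = size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + size]))
        if start + size >= len(words):
            break  # this window already reached the end of the text
    return chunks


def chunk_by_paragraph(text: str, max_words: int) -> list[str]:
    """Pack whole paragraphs (separated by blank lines) into chunks of up to `max_words`.

    A single paragraph longer than `max_words` becomes its own oversized chunk,
    so paragraphs are never split.
    """
    chunks: list[str] = []
    current: list[str] = []
    count = 0
    for para in (p.strip() for p in text.split("\n\n")):
        if not para:
            continue
        n = len(para.split())
        if current and count + n > max_words:
            chunks.append("\n\n".join(current))
            current, count = [], 0
        current.append(para)
        count += n
    if current:
        chunks.append("\n\n".join(current))
    return chunks
```

The overlap in `chunk_words` trades extra storage and tokens for a lower chance that a key sentence is cut in half at a chunk boundary; `chunk_by_paragraph` avoids that cut entirely but produces uneven chunk sizes.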

Agentic Systems and Multi-Agent Orchestration

Agentic systems include tool use, multi-agent orchestration, agent memory, and human-in-the-loop design patterns. As agent frameworks mature, engineers who understand how to design systems that fail gracefully when the underlying LLM returns unexpected output are becoming the critical hire. This is a specific skill: most engineers can wire together a LangChain or LlamaIndex pipeline from documentation. Far fewer can design agent error handling that doesn't require human intervention every time a tool call fails unexpectedly.
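What error handling without constant human intervention can look like at the tool-call level is sketched below in Python: transient failures are retried with exponential backoff, and any remaining failure is returned to the agent as a structured observation instead of an exception that crashes the run. `TransientToolError` and `call_tool_safely` are hypothetical names, not part of LangChain, LlamaIndex, or any other framework.

```python
import time
from typing import Any, Callable


class TransientToolError(Exception):
    """Hypothetical marker for failures worth retrying (timeouts, rate limits)."""


def call_tool_safely(tool: Callable[..., Any], args: dict, retries: int = 2,
                     backoff_s: float = 0.5) -> dict:
    """Run a tool call and always return an observation dict instead of raising."""
    if retries < 0:
        raise ValueError("retries must be >= 0")
    for attempt in range(retries + 1):
        try:
            return {"ok": True, "result": tool(**args)}
        except TransientToolError as exc:
            if attempt == retries:
                return {"ok": False, "error": f"gave up after {retries + 1} attempts: {exc}"}
            time.sleep(backoff_s * 2 ** attempt)  # exponential backoff: 0.5s, 1s, 2s, ...
        except Exception as exc:  # non-transient: report immediately, don't retry
            return {"ok": False, "error": f"{type(exc).__name__}: {exc}"}
    raise AssertionError("unreachable: the last attempt always returns")
```

Returning an observation rather than raising lets the agent's next LLM step decide whether to try a different tool, ask the user for clarification, or stop.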

MLOps and Observability

Observability for LLM systems includes LLM tracing, cost monitoring, latency profiling, and evals-as-code frameworks like LangSmith or Braintrust. A generative AI developer who can build a RAG pipeline but can't instrument it for production monitoring is building a system that will fail silently. Cost monitoring specifically matters: LLM API costs are per-token and can escalate unpredictably with usage patterns that differ from development assumptions.
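Because billing is per token, a basic cost guard only needs token counts and prices. The Python sketch below assumes the provider reports input and output token counts for each call; the per-million-token prices in the example are placeholders, not any vendor's actual rates, and `CostTracker` is a hypothetical helper rather than a LangSmith or Braintrust API.

```python
from dataclasses import dataclass


@dataclass
class CostTracker:
    input_price_per_m: float   # USD per 1M input tokens (placeholder value)
    output_price_per_m: float  # USD per 1M output tokens (placeholder value)
    budget_usd: float          # spend limit for the period being monitored
    spent_usd: float = 0.0

    def record(self, input_tokens: int, output_tokens: int) -> float:
        """Add one call's cost to the running total and return that call's cost."""
        cost = (input_tokens * self.input_price_per_m
                + output_tokens * self.output_price_per_m) / 1_000_000
        self.spent_usd += cost
        return cost

    def over_budget(self) -> bool:
        return self.spent_usd > self.budget_usd


tracker = CostTracker(input_price_per_m=2.0, output_price_per_m=8.0, budget_usd=0.01)
tracker.record(input_tokens=1_000, output_tokens=500)   # 0.006 USD
tracker.record(input_tokens=1_000, output_tokens=500)
print(tracker.over_budget())  # True: 0.012 USD exceeds the 0.01 USD budget
```

In practice the same counts would also be tagged by feature or customer, since usage that differs from development assumptions tends to show up in one path first.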

Hallucination Mitigation - The Litmus Test Skill

Hallucination is the most cited risk in production GenAI systems and the most revealing skill to probe. Ask candidates: "Describe your approach to minimizing hallucinations in a generative AI model during the fine-tuning phase." More specifically: if a deployed customer support agent hallucinates product information 3% of the time, how do you diagnose the root cause, and what are three distinct mitigation strategies with different cost-accuracy trade-offs?

Engineers with genuine production experience can name at least two distinct root causes (retrieval failure versus generation failure) and propose strategies that address each differently: grounding via RAG, confidence scoring, output validation against structured schemas, or ensemble approaches that cross-reference model outputs. Engineers who have only worked with LLMs in controlled settings give a single answer that addresses the symptom rather than the cause.
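One inexpensive output-validation step for the customer-support case is to check that every number in a generated answer (prices, quantities, plan limits) actually appears in the retrieved context. The Python sketch below is a deliberately crude lexical check under that assumption; it misses reformatted values such as "$49.00" versus "49" and says nothing about non-numeric claims, so it would complement, not replace, the other strategies above. `ungrounded_numbers` is a hypothetical name.

```python
from __future__ import annotations

import re

# Integers or decimals, e.g. "49", "10", "3.5".
NUMBER = re.compile(r"\d+(?:\.\d+)?")


def ungrounded_numbers(answer: str, context_docs: list[str]) -> list[str]:
    """Return numbers that appear in the answer but in none of the retrieved documents."""
    context_numbers: set[str] = set()
    for doc in context_docs:
        context_numbers.update(NUMBER.findall(doc))
    return [n for n in NUMBER.findall(answer) if n not in context_numbers]


context = ["Pro plan: 49 USD per month, up to 5 seats."]
answer = "The Pro plan costs 49 USD per month and includes 10 seats."
flagged = ungrounded_numbers(answer, context)
if flagged:
    print("Possible hallucination, unsupported values:", flagged)  # ['10']
```

A flagged answer could be blocked, regenerated with a stricter prompt, or routed to a human, depending on the cost-accuracy trade-off the team has chosen.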

"The biggest mistake companies make when hiring generative AI developers is testing for API knowledge instead of product thinking. Anyone can call OpenAI's endpoint - the real skill is knowing when to use a fine-tuned model versus RAG, how to keep hallucinations from reaching production, and how to build an AI feature that users actually trust. At Incode Group, we've shipped AI assistants, voice-to-text tools, and AI-powered analytics across multiple products - and every time, the developers who made the difference were the ones who understood the user problem first and the technology second," notes Oleh Meleshko, Founder & CEO at Incode Group, a JavaScript-focused software development company that builds custom platforms and ships its own AI-powered SaaS products.

The Interview Framework That Reveals Real Production Depth

Most AI developer interviews are inadequate because interviewers don't know what good looks like. The questions that reveal genuine depth are those calibrated to identify engineers who understand LLM system design - not those who have memorized marketing copy from AI company blogs.

Round 1 — System design for production constraints:

"Walk me through how you would design a RAG system for a 10 million document corpus where latency must be under 500ms at the 95th percentile. What are the trade-offs in your chunking strategy?"

This question has no single right answer - which is the point.

Strong candidates reason through the trade-offs explicitly: approximate nearest neighbour search versus exact search, pre-filtering versus post-retrieval re-ranking, caching strategies for common queries, and the chunking approach that balances retrieval precision with token budget.

Weak candidates describe a standard RAG tutorial architecture without addressing the scale or latency constraint.
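For the caching strategy a strong Round 1 answer mentions, a minimal Python sketch is an LRU cache keyed on a normalised query string, placed in front of the retriever. `QueryCache` and the `retrieve` callable are hypothetical; the sketch ignores invalidation, which a real system needs whenever the index is updated, and it only helps when queries genuinely repeat.

```python
from __future__ import annotations

from collections import OrderedDict
from typing import Callable


class QueryCache:
    """LRU cache of retrieval results, keyed on a normalised query string."""

    def __init__(self, retrieve: Callable[[str], list[str]], capacity: int = 1000):
        self._retrieve = retrieve          # hypothetical: query -> ranked document IDs
        self._capacity = capacity
        self._store: OrderedDict[str, list[str]] = OrderedDict()
        self.hits = 0
        self.misses = 0

    @staticmethod
    def _normalise(query: str) -> str:
        # Queries that differ only in case or spacing share one cache entry.
        return " ".join(query.lower().split())

    def get(self, query: str) -> list[str]:
        key = self._normalise(query)
        if key in self._store:
            self._store.move_to_end(key)   # mark as most recently used
            self.hits += 1
            return self._store[key]
        self.misses += 1
        result = self._retrieve(query)
        self._store[key] = result
        if len(self._store) > self._capacity:
            self._store.popitem(last=False)  # evict the least recently used entry
        return result
```

A cache hit skips both the embedding call and the vector search, which is where much of a 500ms p95 budget tends to go; the hit rate is worth logging to confirm the cache earns its memory.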

Round 2 — Debugging a production failure:

"We have a customer support agent who hallucinates product information 3% of the time. How do you diagnose the root cause, and what are three distinct mitigation strategies with different cost and accuracy trade-offs?"

The diagnosis question separates engineers who understand the GenAI stack from those who know the surface.

Root causes differ: the retrieval system returns irrelevant documents (retrieval failure), the model generates plausible-sounding facts not present in the context (generation failure), or the system prompt doesn't adequately constrain the model's behaviour (prompting failure). Each requires a different mitigation.

Engineers who jump straight to "lower the temperature" or "add more RAG documents" haven't diagnosed the problem - they've applied a generic fix.
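The retrieval-versus-generation split can be turned into a first-pass triage over logged failures, assuming each failing case has been labelled with the fact the answer should have contained. The Python sketch below uses exact substring matching as a stand-in for a real relevance or faithfulness check, and `FailedCase` and `triage` are hypothetical names. Cases that pass both checks are routed to manual review, where issues such as an under-constrained system prompt would need a closer look.

```python
from __future__ import annotations

from collections import Counter
from dataclasses import dataclass


@dataclass
class FailedCase:
    question: str
    expected_fact: str          # the correct fact, as worded in the source documents
    retrieved_docs: list[str]   # what the retriever passed to the model
    answer: str                 # what the model actually said


def triage(case: FailedCase) -> str:
    fact = case.expected_fact.lower()
    if not any(fact in doc.lower() for doc in case.retrieved_docs):
        return "retrieval_failure"    # the right evidence never reached the model
    if fact not in case.answer.lower():
        return "generation_failure"   # evidence was in context, but the answer didn't use it
    return "manual_review"            # fact retrieved and stated: needs a human look (e.g. prompting issue)


cases = [
    FailedCase("Battery life?", "12 hours", ["Specs: weight 1.2 kg"], "About 20 hours."),
    FailedCase("Battery life?", "12 hours", ["Battery: up to 12 hours"], "About 20 hours."),
]
print(Counter(triage(c) for c in cases))
```

The resulting counts tell you where to spend effort: a retrieval-heavy distribution points at chunking, embeddings, or re-ranking, while a generation-heavy one points at grounding instructions and output validation.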

Round 3 — Architectural decision-making:

"When would you choose fine-tuning over RAG, and when would you choose neither? Give a real example of each."

This question cuts through candidates who know the concepts but haven't applied them under real constraints. Fine-tuning makes sense when the task requires consistent tone, specialized domain vocabulary, or behaviour modification that prompt engineering can't reliably achieve. RAG makes sense when the knowledge base changes frequently or is too large to fit in a context window. Neither makes sense for many product use cases - zero-shot and few-shot prompting on a frontier model solves the problem more cheaply.

Candidates who recommend fine-tuning for everything, or who dismiss RAG without a reasoned position, are signaling either theoretical bias or limited production exposure.
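The rules of thumb in this round can be written down as a small decision helper, roughly the reasoning a strong answer walks through. This Python sketch is an illustrative restatement of the paragraph above under simplifying boolean assumptions, not a real decision procedure; `UseCase` and `recommend` are hypothetical, and in practice each flag would come from evaluating prompting on a representative test set.

```python
from dataclasses import dataclass


@dataclass
class UseCase:
    needs_private_knowledge: bool        # answers depend on data the base model wasn't trained on
    knowledge_changes_often: bool
    knowledge_fits_in_context: bool
    behaviour_gap_after_prompting: bool  # tone, vocabulary, or format still unreliable with good prompts


def recommend(uc: UseCase) -> list:
    choices = []
    # RAG: the knowledge changes often or is too large to put in the prompt.
    if uc.needs_private_knowledge and (uc.knowledge_changes_often or not uc.knowledge_fits_in_context):
        choices.append("RAG")
    # Fine-tuning: behaviour that prompt engineering can't reliably produce.
    if uc.behaviour_gap_after_prompting:
        choices.append("fine-tuning")
    # Neither: zero-/few-shot prompting (small, stable knowledge can go straight into the prompt).
    return choices or ["zero-/few-shot prompting"]
```

Note that RAG and fine-tuning are not mutually exclusive here: a use case can need fresh knowledge and a consistent house style at the same time.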

Round 4 — Graceful degradation design:

"How do you design an agent system that degrades gracefully when the underlying LLM returns an unexpected output format?"

This question reveals whether the engineer has shipped an agent system or only built one for a demo.

In production, LLMs occasionally return output that violates the expected schema - even with explicit format instructions. The right answer includes: output validation before action execution, retry with clarification prompts as a first fallback, deterministic fallback behavior when retries are exhausted, and logging that captures the failure mode for review.

Engineers who haven't shipped agents in production typically describe only the happy path.
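The four elements of that answer fit in a short Python sketch: validate before acting, retry with a clarification prompt, fall back to a deterministic action, and log every failure. The action schema, the `escalate_to_human` fallback, and the `call_model` callable (any function that sends a prompt to an LLM and returns its text) are assumptions for illustration; a real agent would typically validate against a JSON Schema or a typed model and choose a fallback suited to the product.

```python
from __future__ import annotations

import json
import logging
from typing import Callable, Optional

logger = logging.getLogger("agent")

# Assumed action schema: every agent reply must be {"action": <str>, "arguments": <dict>}.
SCHEMA = {"action": str, "arguments": dict}
CLARIFICATION = ('\n\nYour previous reply was not valid. Respond with JSON only, shaped like '
                 '{"action": "<name>", "arguments": {...}}.')


def validate(raw: str) -> Optional[dict]:
    """Return the parsed action if it matches SCHEMA, otherwise None."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return None
    if not isinstance(data, dict):
        return None
    if not all(isinstance(data.get(key), typ) for key, typ in SCHEMA.items()):
        return None
    return data


def next_action(call_model: Callable[[str], str], prompt: str, max_retries: int = 2) -> dict:
    """Validate, retry with a clarification prompt, then fall back deterministically."""
    raw = call_model(prompt)
    for attempt in range(max_retries + 1):
        action = validate(raw)
        if action is not None:
            return action  # only validated output ever reaches tool execution
        logger.warning("invalid agent output (attempt %d): %r", attempt + 1, raw[:200])
        if attempt < max_retries:
            raw = call_model(prompt + CLARIFICATION)
    logger.error("retries exhausted, falling back to escalate_to_human")
    return {"action": "escalate_to_human", "arguments": {}}  # hypothetical deterministic fallback
```

With the default `max_retries=2`, the model is called at most three times before the fallback fires, which caps both latency and cost on the failure path.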

The Technical Skills Stack: What to Verify Before the Interview

| Layer | Skills to verify | Verification method |
|---|---|---|
| LLM APIs | OpenAI, Anthropic, Google Vertex, Bedrock; streaming; function calling; structured output | Take-home project: build a small AI feature that uses tool calling |
| Prompt engineering | System prompt design; few-shot structuring; chain-of-thought; output format control | Live prompt debugging session with a real failure case |
| RAG | Vector DB selection (Pinecone, pgvector, Qdrant, Weaviate); embedding models; chunking strategy; hybrid retrieval | Architecture whiteboard: corpus-specific design question |
| Agent frameworks | LangChain, LlamaIndex; multi-agent orchestration; tool use; human-in-the-loop | Code review of an existing agent implementation |
| MLOps | LLM tracing (LangSmith, Braintrust); cost monitoring; latency profiling; CI/CD for AI | Ask how they detect silent degradation in a deployed LLM system |
| Infrastructure | AWS/Azure/GCP; SageMaker, Vertex AI; container orchestration; GPU cost optimization | Discuss a specific deployment they managed and its scaling decisions |
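A common answer to the silent-degradation question is a fixed evaluation set that runs on every deploy and on a schedule (since a hosted model or the retrieval index can change without a code deploy), with an alert when the pass rate drops below a baseline. A minimal Python sketch under that assumption follows; `check_for_regression` is a hypothetical name, the thresholds are illustrative, and how each case is scored (exact match, rubric, LLM judge) is left to the evaluation framework.

```python
from __future__ import annotations


def check_for_regression(baseline_pass_rate: float, results: list[bool],
                         max_drop: float = 0.05, min_samples: int = 50) -> str:
    """Compare the current eval pass rate with a stored baseline.

    `results` holds one boolean per eval case (True = passed). The thresholds
    are illustrative defaults, not recommendations.
    """
    if len(results) < min_samples:
        return "insufficient_data"  # too few cases to distinguish noise from a real drop
    pass_rate = sum(results) / len(results)
    if baseline_pass_rate - pass_rate > max_drop:
        return f"regression: pass rate {pass_rate:.1%} vs baseline {baseline_pass_rate:.1%}"
    return "ok"
```

Candidates who describe something like this, plus production-side signals such as rising fallback or escalation rates, are describing how they would notice a failure no exception will ever report.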

The take-home project format that surfaces the most signal: a 4–6 hour project building a small AI feature in the candidate's preferred stack, followed by a code review session where they explain their architectural decisions and trade-offs. The code review reveals more than the code itself - how engineers talk about their choices distinguishes those who deliberated from those who guessed.

Salary Benchmarks and Rate Ranges in 2026

Understanding the market before you post a role prevents both over-scoping (requiring frontier skills at mid-level compensation) and under-scoping (paying senior rates for integration work).

| Profile | Salary (US full-time) | Hourly rate (US) | Salary (Europe full-time) | Hourly rate (Europe) |
|---|---|---|---|---|
| AI Engineer (LLM integration, mid) | $150,000–$180,000 | $75–$110/hr | $100,000–$121,000 | $45–$70/hr |
| AI Engineer (senior, production systems) | $180,000–$230,000 | $110–$150/hr | $121,000–$154,000 | $70–$90/hr |
| LLM fine-tuning specialist | $220,000–$280,000 | $150–$250/hr | $147,000–$188,000 | $80–$130/hr |
| Prompt engineer | $130,000–$170,000 | $60–$100/hr | $87,000–$114,000 | $30–$50/hr |
| LLM Architect (principal/staff) | $250,000–$312,000 | $150–$250/hr | $168,000–$209,000 | $100–$148/hr |
| MLOps engineer | $170,000–$220,000 | $100–$180/hr | $114,000–$147,000 | $57–$111/hr |

The 45-day average time-to-hire for senior AI engineers means budget planning should account for the role sitting vacant during the search. Organisations with formalised AI hiring processes fill roles 40% faster and report significantly higher retention than those relying on ad-hoc recruitment. For most companies, investing in a structured process - defined skill criteria, staged technical assessments, and calibrated interview questions - can deliver that speed-up as early as the first role filled.

Where to Find Verified Generative AI Engineers

The 45-day timeline and $206,000 average salary make a clear case for channels that reduce screening overhead rather than amplifying it. Standard developer hiring processes are not sufficient for AI roles - they require deeper technical vetting, domain-specific assessments, and often a global talent search.

For product companies at Seed or Series A, pre-vetted platforms with AI-specific screening are the most efficient path. Cortance's pool of AI engineers and LLM developers is screened for hands-on experience building production-grade LLM applications - RAG pipelines, enterprise AI chatbots, fine-tuned domain models, AI copilots, and MLOps stacks on AWS, Azure, and GCP. Engineers have an average of six years of experience with production AI systems, and all are mid-to-senior level.

For companies that have reached Series A and are building a permanent AI engineering function, the structured hiring process above, combined with a pre-vetted sourcing channel to compress the search phase, produces the strongest combination of speed, quality, and retention.

FAQ

- AI vs ML engineer for generative AI? An AI engineer builds AI-powered products: LLM integrations, RAG pipelines, AI APIs, and agentic workflows using existing frontier models. An ML engineer trains, fine-tunes, and deploys custom models - managing training pipelines and GPU infrastructure. Most product companies building on top of GPT-4, Claude, or Gemini need an AI engineer, not an ML engineer.

- What technical skills should a generative AI developer have in 2026? Production-level skills in LLM API integration (OpenAI, Anthropic, Google Vertex, AWS Bedrock), RAG architecture with vector databases (Pinecone, pgvector, Qdrant, Weaviate), prompt engineering including system prompts and few-shot structuring, agentic system design with LangChain or LlamaIndex, MLOps for LLM observability (LangSmith, Braintrust, cost and latency monitoring), and cloud infrastructure on AWS, Azure, or GCP. Python is the baseline.

- How much does it cost to hire a generative AI developer in 2026? Average AI engineer salaries reached $206,000 in the US in 2025. Contract rates range from $75–$110/hr for mid-level AI engineers to $150–$250/hr for LLM fine-tuning specialists and architects. Pre-vetted contract platforms provide access to senior AI engineers at $45–$70/hr for European talent and $50–$150/hr for global talent, with no long-term commitment required until the architecture stabilizes.

- What is RAG and why is it important for generative AI developers? Retrieval-Augmented Generation (RAG) is the architecture that grounds LLM outputs in factual, up-to-date information by retrieving relevant documents from a vector database before generating a response. It reduces hallucination, enables private data integration without fine-tuning, and supports knowledge bases that change frequently. Most enterprise GenAI applications use RAG as their core architecture.

- RAG vs fine-tuning in generative AI? RAG retrieves external documents at inference time to ground the model's response in specific, current information - no model modification required. Fine-tuning trains the model on additional data to change its behaviour, tone, or domain knowledge - requiring compute, labelled data, and expertise in training pipelines. RAG is faster, cheaper to maintain, and suited for knowledge bases that change frequently. Fine-tuning is more appropriate when the task requires consistent specialized behavior that prompting can't reliably achieve. Most product use cases are better served by RAG or zero-shot prompting than by fine-tuning.

Conclusion

Hiring a generative AI developer in 2026 is a specific technical challenge that generic software engineering hiring processes aren't equipped to solve. The role taxonomy problem - confusing AI engineers with ML engineers before the search starts - is the highest-cost mistake, and it's entirely preventable. The vetting problem - running interviews that don't distinguish production depth from tutorial familiarity - is the second, and it's solvable with four well-calibrated questions.

The companies filling AI roles 40% faster are the ones that invested in a structured process: defined skill criteria, staged technical evaluation, and sourcing channels that compress the 45-day average rather than extending it. For companies that need production-ready AI engineering capacity while the hiring process runs - or before the architecture is stable enough to justify a full-time hire - a pre-vetted sourcing channel with AI-specific screening closes the gap in days rather than quarters.

Related Articles

How to Hire a Blockchain Developer: Technical Vetting, Role Clarity, and the Fastest Path to a Verified Engineer

Senior blockchain developers are rare. The harder problem is verification - a strong resume and a weak engineer look identical.

Read article

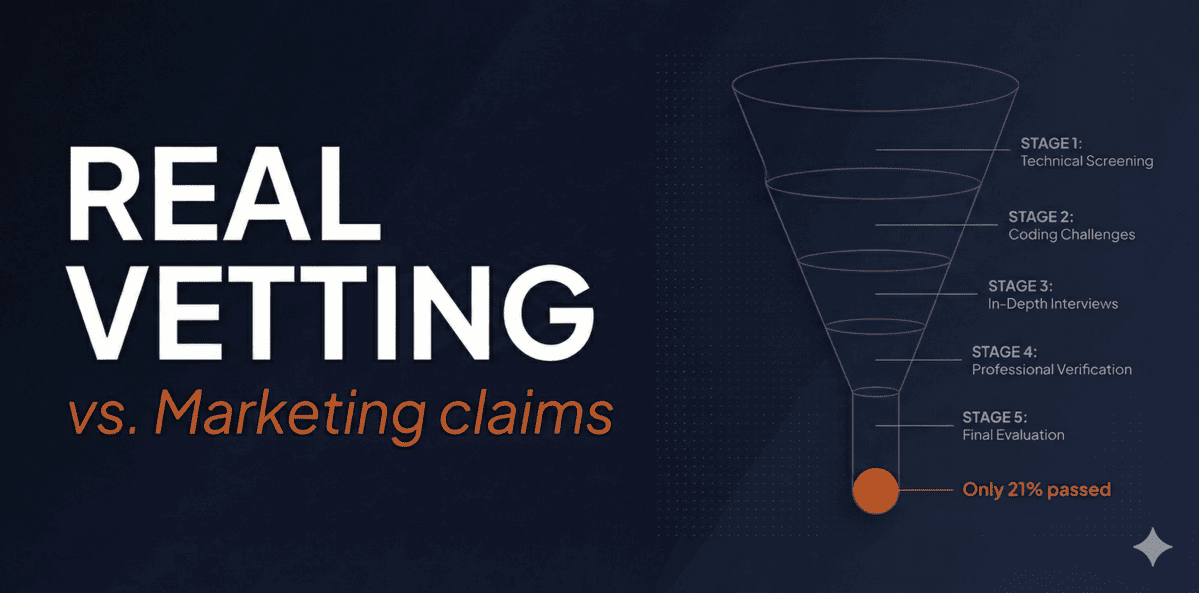

How to Hire Pre-Vetted Developers - and Tell Real Vetting from a Marketing Claim

The promise is consistent across every platform. The execution behind it is not.

- #howto

- #smarthiring

- #findadeveloper

- #hire

- #Vetted Developers

- #Pre-verified Developers

- #Outstaffing

- #Team Augmentation